Capturing Tactile Properties of Real Surfaces for Haptic Reproduction

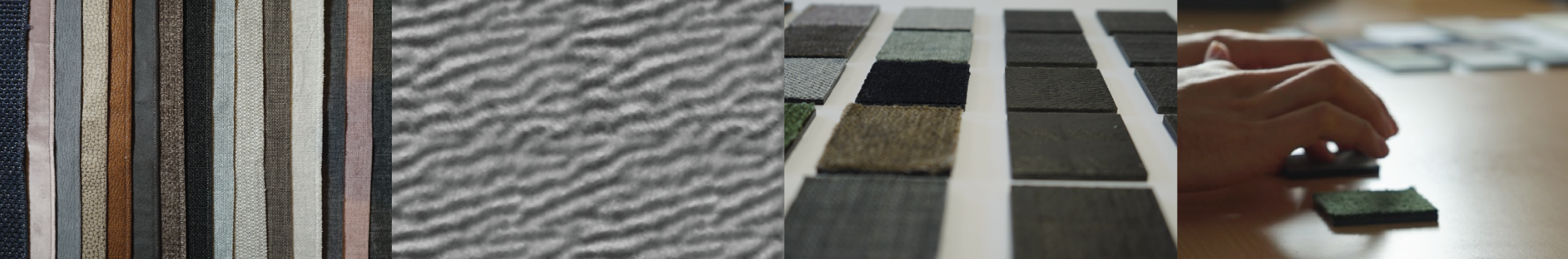

Tactile feedback of an object's surface enables us to discern its material properties and affordances. This understanding is used in digital fabrication processes by creating objects with high-resolution surface variations to influence a user’s tactile perception. As the design of such surface haptics commonly relies on knowledge from real-life experiences, it is unclear how to adapt this information for digital design methods. In this work, we investigate replicating the haptics of real materials. Using an existing process for capturing an object’s microgeometry, we digitize and reproduce the stable surface information of a set of 15 fabric samples. In a psychophysical experiment, we evaluate the tactile qualities of our set of original samples and their replicas. From our results, we see that direct reproduction of surface variations is able to influence different psychophysical dimensions of the tactile perception of surface textures. While the fabrication process did not preserve all properties, our approach underlines that replication of surface microgeometries benefits fabrication methods in terms of haptic perception by covering a large range of tactile variations. Moreover, by changing the surface structure of a single fabricated material, its material perception can be influenced. We conclude by proposing strategies for capturing and reproducing digitized textures to better resemble the perceived haptics of the originals.

Citation

Donald Degraen, Michal Piovarči, Bernd Bickel, Antonio Krüger, Capturing Tactile Properties of Real Surfaces for Haptic Reproduction, Proceedings of the 34th Annual ACM Symposium on User Interface Software and Technology (UIST '21).

@inproceedings{Degraen2021,

author = {Donald Degraen and Michal Piovar\v{c}i and Bernd Bickel and Antonio Kr{\"u}ger},

title = {Capturing Tactile Properties of Real Surfaces for Haptic Reproduction},

booktitle = {The 34th Annual ACM Symposium on User Interface Software and Technology},

series = {UIST '21},

year = {2021},

}